MULTI-X to showcase its innovations at EuCNC 2026

Join us at our booth featuring posters, videos, demos, and more!

We are pleased to announce that MULTI-X will participate in EuCNC 2026, the premier European conference on networks and communications. Taking place in Málaga, Spain, from June 2nd to June 5th, 2026, the event is a platform for showcasing advances in telecommunications and brings together the entire European 6G community. MULTI-X will present its progress at a booth titled “MULTIX: Advancing 6G-RAN through multi-technology, multi-sensor fusion, multi-band and multi-static perception”.

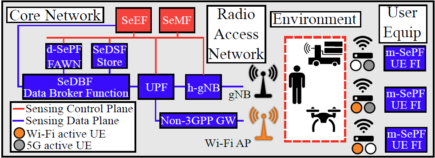

MULTI-X is a pioneering research initiative focused on developing a comprehensive framework for Integrated Sensing and Communications (ISAC). By treating the network as a high-resolution sensor, the project bridges 5G and Wi-Fi technologies into a single, unified Multi-RAT (Radio Access Technology) system. Through the integration of advanced artificial intelligence, industrial-grade hardware, and high-fidelity digital twins, MULTI-X delivers situational awareness beyond what onboard sensors alone can provide and democratizes access to granular network data for next-generation research.

The MULTI-X Testbed: A Unified ISAC Framework

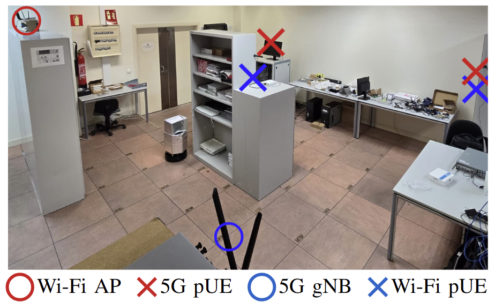

Our testbed provides a fully controllable, end-to-end 5G environment that combines open-source software with industrial-grade hardware, creating a highly repeatable proxy for real-world measurements.

Key Innovations

Non-Line-of-Sight Awareness

Robots that rely only on onboard sensors are "blind" to what lies behind a closed door or around a sharp turn. By integrating 5G and Wi-Fi passive sensing, the Digital Twin can visualize "ghost" entities (people or objects sensed over the air but not yet visible to the robot), enabling the robot to adjust its path preemptively, before any physical encounter occurs.

Hardware-in-the-Loop Integration

The synchronization between the USRP (Universal Software Radio Peripheral) software-defined radios and the NVIDIA Isaac Sim environment enables a bidirectional flow of data. The Digital Twin is not just a mirror: it is a predictive tool that feeds measurements from the multiband receivers into various inference models, continuously validating the robot’s real-world state against the simulated expectations.
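One simple way to picture "validating the robot’s real-world state against the simulated expectations" is to compare the measured pose with the pose the Digital Twin predicts and flag any drift beyond a tolerance. The Python sketch below is a hypothetical illustration only: the (x, y, yaw) pose format, the tolerances, and the function names are assumptions, not the MULTI-X validation logic.

```python
import math
from typing import Tuple

# Hypothetical 2D pose: (x [m], y [m], yaw [rad]).
Pose2D = Tuple[float, float, float]


def wrap_angle(angle: float) -> float:
    """Map an angle in radians to the interval [-pi, pi)."""
    return (angle + math.pi) % (2.0 * math.pi) - math.pi


def state_consistent(
    real: Pose2D,
    simulated: Pose2D,
    pos_tol: float = 0.10,             # assumed position tolerance in metres
    yaw_tol: float = math.radians(5),  # assumed heading tolerance
) -> bool:
    """Return True if the measured pose stays within tolerance of the twin's prediction."""
    dx = real[0] - simulated[0]
    dy = real[1] - simulated[1]
    # Wrap the heading difference so that poses near +/-180 degrees
    # are not reported as a large error.
    dyaw = wrap_angle(real[2] - simulated[2])
    return math.hypot(dx, dy) <= pos_tol and abs(dyaw) <= yaw_tol
```

The angle wrapping is the main design point: without it, headings of 179° and -179° would look 358° apart instead of 2°.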

Advanced Beam Training for Sensing

mmWave technology is used for more than data streaming. Beam training, the sweep a mmWave link performs to align transmit and receive beams, is normally pure communication overhead; by reusing its measurements, the system extracts "free" sensing data without extra transmissions. This directional sensing is particularly effective in constrained environments such as corridors, where it provides a high-resolution, radar-like view using the existing video uplink hardware.
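To illustrate how beam-training overhead can double as a coarse angular sensor, here is a minimal Python sketch. It assumes, hypothetically, that each sweep reports one received-power value in dB per codebook beam and that a baseline sweep of the empty corridor is available; the function name, input layout, and 3 dB threshold are illustrative assumptions, not the MULTI-X implementation.

```python
from typing import List, Sequence, Tuple


def changed_beams(
    angles_deg: Sequence[float],
    baseline_db: Sequence[float],
    current_db: Sequence[float],
    threshold_db: float = 3.0,
) -> List[Tuple[float, float]]:
    """Compare one beam-training sweep against an empty-scene baseline.

    Each input holds one entry per codebook beam (hypothetical layout).
    Returns (beam angle, power change in dB) for every beam whose received
    power moved by more than threshold_db, i.e. directions where the scene changed.
    """
    if not (len(angles_deg) == len(baseline_db) == len(current_db)):
        raise ValueError("inputs must have one entry per codebook beam")
    changes = []
    for angle, base, cur in zip(angles_deg, baseline_db, current_db):
        # A rise may indicate a new reflector; a drop may indicate a blocked path.
        delta = cur - base
        if abs(delta) > threshold_db:
            changes.append((angle, delta))
    return changes
```

For example, if only the 0° beam rises by 6 dB relative to the baseline, the sketch reports a single change in that direction, which is the kind of per-direction cue a corridor deployment could exploit.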

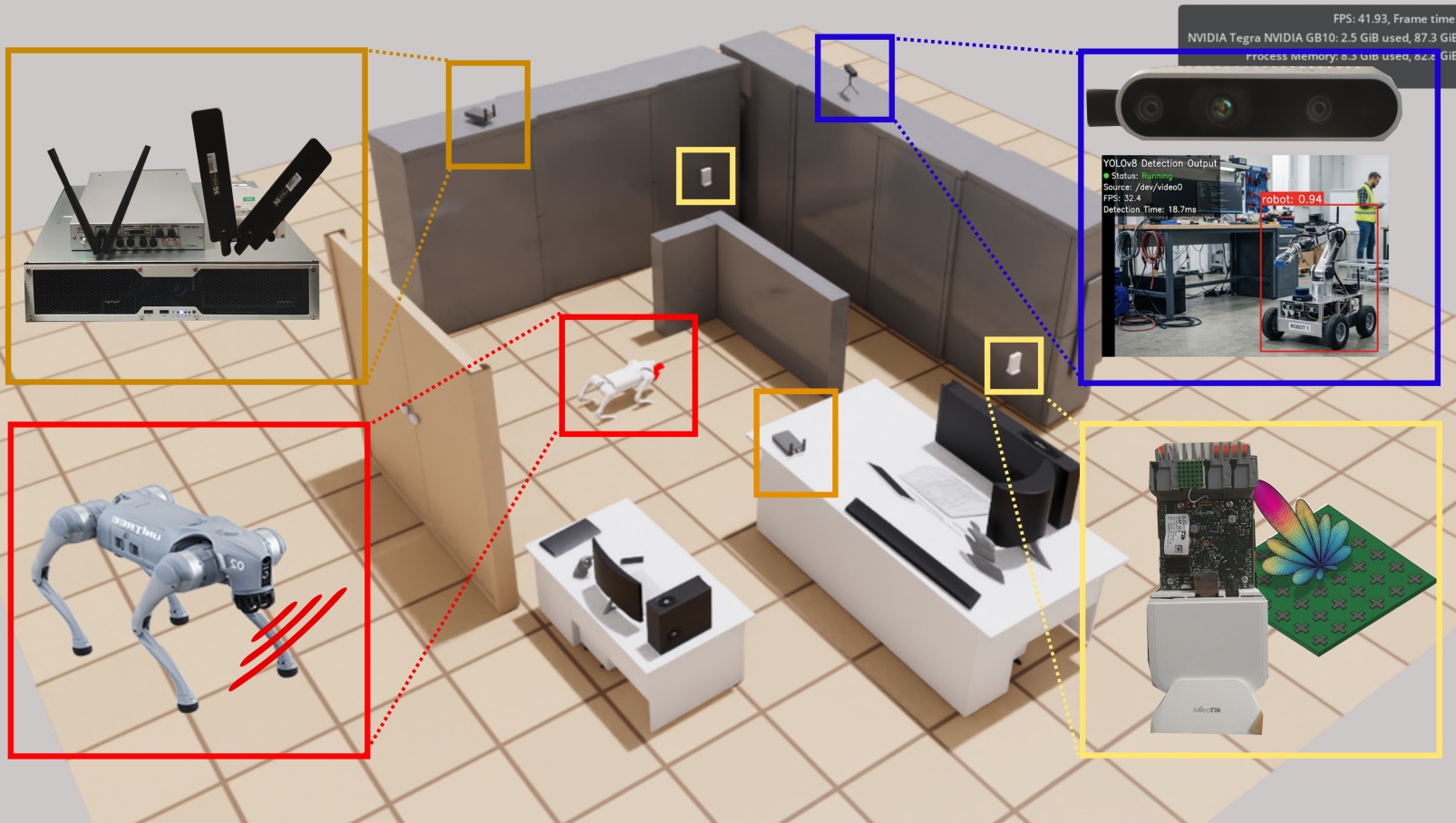

EuCNC 2026 Live Demo: Multi-Modal Robotic Digital Twins

At our booth, we will showcase a high-fidelity Digital Twin integrated within NVIDIA Isaac Sim. This interactive demonstration bridges physical robotic maneuvers with a virtual environment by fusing traditional onboard perception with cutting-edge wireless sensing technologies.

The Core Components

Mobile Platform

The Unitree Go2 quadruped robot serves as the primary mobile agent. It uses high-frequency LiDAR for 360° spatial mapping and odometry for precise localization. These sensors allow the Digital Twin to track the robot dog’s position and map line-of-sight (LoS) obstacles in real time with millimetric accuracy.
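Mapping obstacles in the Digital Twin requires expressing each LiDAR return in a shared world frame using the odometry pose. The following minimal Python sketch shows this for a simplified 2D planar scan; the (x, y, yaw) pose format, the LaserScan-style `angle_min`/`angle_increment` parameters, and the 30 m range limit are assumptions for illustration, not the Go2 or Isaac Sim interface.

```python
import math
from typing import List, Sequence, Tuple

# Hypothetical odometry pose: (x [m], y [m], yaw [rad]) in the world frame.
Pose2D = Tuple[float, float, float]


def scan_to_world(
    pose: Pose2D,
    ranges: Sequence[float],
    angle_min: float,
    angle_increment: float,
    max_range: float = 30.0,  # assumed sensor range limit in metres
) -> List[Tuple[float, float]]:
    """Convert a planar scan (one range per beam) into world-frame obstacle points."""
    x, y, yaw = pose
    cos_yaw, sin_yaw = math.cos(yaw), math.sin(yaw)
    points = []
    for i, r in enumerate(ranges):
        # Skip invalid returns: zero, out of range, inf, and NaN all fail this test.
        if not (0.0 < r <= max_range):
            continue
        beam = angle_min + i * angle_increment
        # Point in the robot frame.
        px, py = r * math.cos(beam), r * math.sin(beam)
        # Rotate by the robot heading, then translate by its position.
        points.append((x + px * cos_yaw - py * sin_yaw,
                       y + px * sin_yaw + py * cos_yaw))
    return points
```

A real pipeline would work with 3D point clouds and a full 6-DoF pose, but the rotate-then-translate step is the same idea.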

Visual Intelligence

The onboard camera stream is processed by a YOLOv8 model for real-time target recognition. The resulting visual metadata is offloaded to the NVIDIA Spark framework, which integrates the identified objects into the Isaac Sim environment, allowing the Digital Twin to "see" and categorize specific entities in the room.

Wireless Sensing

Using USRP hardware, the system implements multiband receivers for passive sensing, which allows it to detect movement and environmental changes without a direct visual feed. The 5G and Wi-Fi receivers infer activity behind walls or around corners by analyzing signal disturbances, while the mmWave antennas exploit highly directional beams in corridor environments.
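Detecting movement by "analyzing signal disturbances" can be illustrated with a common, simple technique: flag time windows in which the variance of a received amplitude (for example, RSSI or channel-state amplitude) rises well above what was measured in a static scene. The Python sketch below is a hypothetical illustration; the window length, scaling factor, and function names are assumptions, and the inference models actually used by MULTI-X are not described here.

```python
import statistics
from typing import List, Sequence


def calibrate_threshold(
    static_samples: Sequence[float], factor: float = 4.0, floor: float = 1e-6
) -> float:
    """Derive a variance threshold from samples recorded while the scene is static."""
    # Scale the baseline noise variance; the floor avoids a zero threshold
    # when the calibration samples happen to be identical.
    return max(factor * statistics.pvariance(static_samples), floor)


def motion_flags(
    amplitudes: Sequence[float], threshold: float, window: int = 5
) -> List[bool]:
    """Return one flag per sliding window: True where amplitude variance exceeds threshold."""
    if window < 2:
        raise ValueError("window must contain at least two samples")
    flags = []
    for start in range(len(amplitudes) - window + 1):
        chunk = amplitudes[start:start + window]
        flags.append(statistics.pvariance(chunk) > threshold)
    return flags
```

Calibrating the threshold from an empty-room recording keeps the detector tied to the actual noise level of each deployment rather than to a fixed constant.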